Using a context map checklist

At Glofox, we regularly run whole-team exploratory testing sessions. These are very effective at discovering problems that testing around acceptance criteria does not find: we get multiple perspectives, and developers get the headspace to do explicit exploratory testing in a way they might not when focused on writing the code. However, I'd observed that we would occasionally discover bugs that arguably should have been caught earlier in the process, either during user story mapping or in three amigos sessions. These took the form of missed use cases, or of inconsistent behaviour between applications or within a single application, for example with unsuccessful transactions.

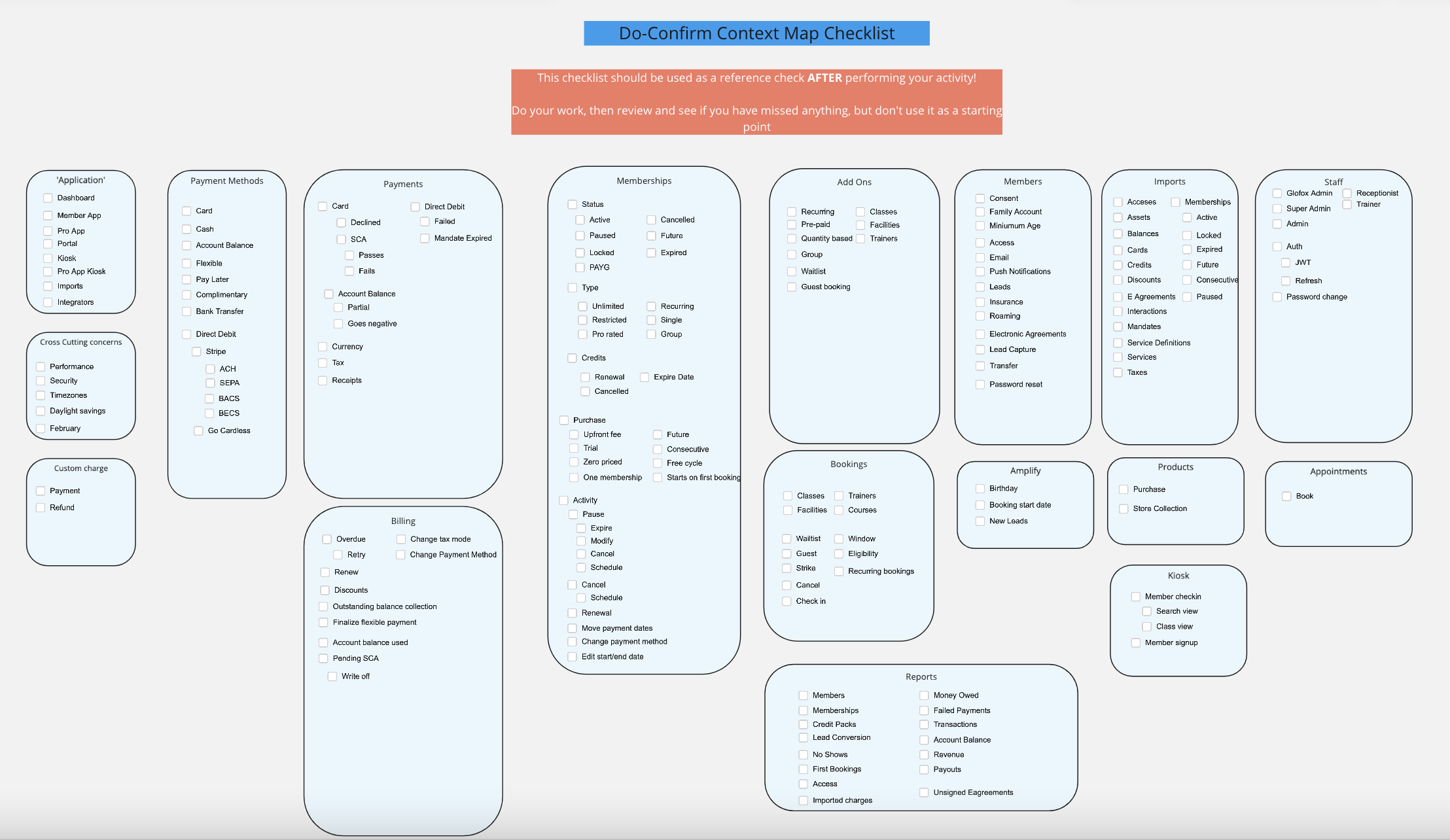

I'd recently read the excellent 50 Quick Ideas to Improve Your Tests, and one of the ideas that stood out was using risk checklists for cross-cutting concerns. This entails using a 'Do-Confirm' checklist as outlined in The Checklist Manifesto: instead of ticking off items as people work, you use the checklist as a review aid, i.e. do the work, then pause and review to see if anything was missed.

This gave me the idea of creating a checklist of the main domain and sub-domain areas within our apps. The book also notes that "good checklists should not aim to be comprehensive how-to guides". With that in mind, I felt it was important not to create an exhaustive checklist of all the functionality, but merely to highlight the main areas. Getting the right level of detail is tricky but important! Too high level and it's not valuable enough; too low level and you get lost in the detail. You need to be able to scan it relatively quickly.

So this is what we came up with:

As noted at the top, we want people to try to recall functionality and domain knowledge first, and only then refer to the checklist. We don't want people's focus to narrow to what is in the checklist, as it is absolutely not complete (we can't list everything). Instead, it acts as a reminder of parts of the overall Glofox domain that some people may be less familiar with.

Historically, testers have been valued not only for providing a differing perspective and applying critical thinking, but also for being perceived as having deeper domain knowledge than developers. I think we need to change how we work so that everyone in the team improves their domain knowledge, and testers do not become the single point of failure for it. What happens if a tester is off on holiday? I think this checklist is one way to help.